In December 2025, I declared Devin to be cooked. Why? Because I made my own Devin that runs Claude Code in a Modal Sandbox. And it took less than a week. That MVP was pretty solid, but in the intervening months, I've spent many weekend hours building the last 20% of this "product" (no, you can't buy it, it's just for me). So, it's about time for a follow-up post documenting the challenges I ran into with the initial version, and all the extra features that have been sprinkled on top as a result.

Where We Left Off

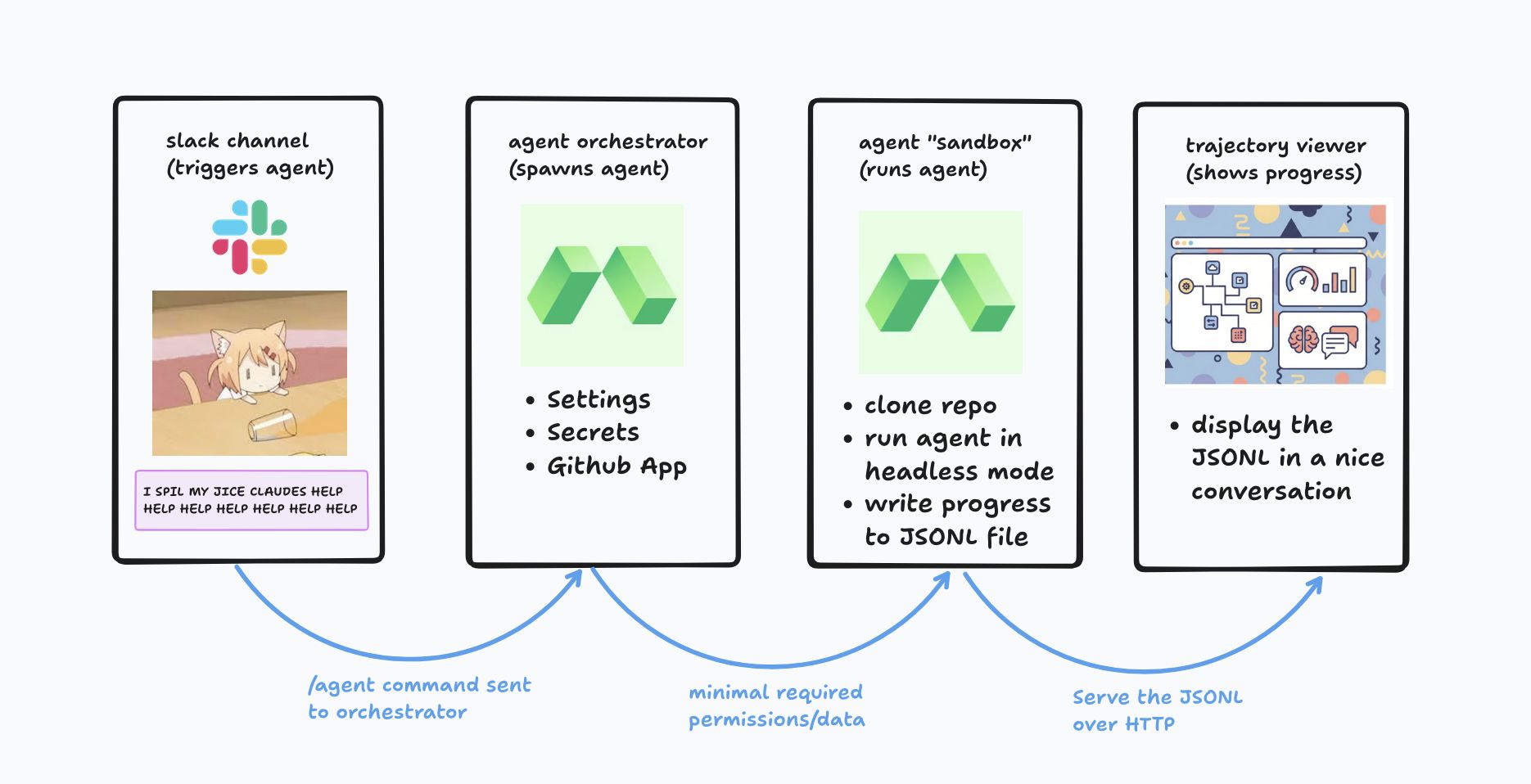

In case you forgot, or are not already a diligent reader of my blog, this is what we built before:

It's crazy simple. A Slack app listens for messages. When the agent is summoned on Slack, you make a sandbox, you run Claude Code (or Codex, or Cursor, or Gemini (lol)) in the sandbox, and you stream the output to S3 so you can watch it work if you want. When it's done, a script pushes the resulting code to GitHub, and it's ready for review. Yay!

The basic thesis behind why this is a good idea is: (a) models are trained to work well in their own harnesses, so it's good to work with the harness rather than against it; and (b) using Anthropic's harness is the only sanctioned way to consume subsidized Anthropic tokens, which are strictly better than the unsubsidized ones. (It can also use AWS Bedrock credits, which are 100% subsidized.)

But one quickly runs into problems and limitations with kicking off a model in an environment where it doesn't have any of the affordances it has when running locally, which ends up forcing you to get involved more than you should for an agent that's supposed to be, y'know, asynchronous. The rest of this post is about some of these rough edges and how I sanded them down.

Skills! Skills! Skills!

I'm a shill for skills. "A skill is just a prompt." No. You're dumb. I should do a whole post about the proper way to make skills, but suffice it to say, my skills are not just prompts; they are usually built around a CLI that can be installed with uv tool install, which exposes a handful of opinionated, helpful commands that allow a model to get stuff done (examine a PDF, call a specific search API, create a Word Document) without having to figure out how to do it from scratch and waste a bunch of time along the way. Skills are the greatest thing ever, and every coding agent needs them.

It's easy to install skills locally. But how do you provide them to a cloud agent? You can just use repo-level skills, but I have a lot of skills that are used across many repos. You could attach the same shared bundle of skills for every coding agent run, but then there would be all these distracting, irrelevant skills, making your Opus confused and stupid. Instead, I settled on baking a "library" of skills into the sandbox image. When you invoke a model via Slack, you can specify which skills to give it by name with -s [name of skill]. Then, the agent spawns with the skill copied to ~/.claude/skills. Superpowers, engage!
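Here's a minimal sketch of that copy step, not my actual code. It assumes each skill is a directory inside the baked-in library; the function name and the library path in the usage note are made up for illustration.

```python
import shutil
from pathlib import Path


def install_skills(names, library_dir, target_dir):
    """Copy only the requested skills from the library into target_dir."""
    library_dir, target_dir = Path(library_dir), Path(target_dir)
    # Reject anything that is not a plain directory name inside the library.
    missing = [
        n for n in names
        if Path(n).name != n or not (library_dir / n).is_dir()
    ]
    if missing:
        # Fail before copying anything so the agent never starts half-equipped.
        raise FileNotFoundError(f"unknown skills: {', '.join(missing)}")
    target_dir.mkdir(parents=True, exist_ok=True)
    installed = []
    for name in names:
        dest = target_dir / name
        shutil.copytree(library_dir / name, dest, dirs_exist_ok=True)
        installed.append(dest)
    return installed
```

With a hypothetical library at /opt/skills-library, a run requested with two `-s` flags would boil down to something like `install_skills(["pdf", "browser"], "/opt/skills-library", Path.home() / ".claude" / "skills")`. Everything not named stays in the library, out of the model's sight.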

Thanks to skills, I can now send my coding agent to autonomously use a browser to enrich sales leads, scrape files from an ancient government portal, or dissect a PDF to find all the form fields. Coding agents with skills are, in fact, everything agents.

Automatic Code Review

Is it just me, or has Opus gotten dumber? JK. But also not really JK. Models make mistakes. Other models (especially Codex) can catch them. That's why we need automated code review. I've talked before about how I have Codex set up with a post-commit hook to run a code review on every single commit I make locally. But my cloud agent was opening PRs that hadn't gone through the same level of scrutiny, and that made me sad.

I fixed this by adding a "review" step that runs by default after every "implementation" step. I have a vision for a less-stupid version of a Ralph loop that alternates review steps with implementation steps, but for now, there's just one of each. GitHub is used for coordination. Once the PR lands, a new session kicks off, checks out the PR branch, reviews everything, and leaves comments on the PR. Then, when I open it up on GitHub, I already have a good sense of whether it's merge-worthy, and if there's nothing blocking, whether there are some small nits to clean up after merging. This has been extremely helpful.

Branches and PRs

Did I mention that code review was great? It's so great that sometimes I want to add some extra code review to my code review. The easiest way to do that is to point my Slack coding agent at my PR. Enter the --pr flag. Now I can simply pass a link to the PR; the repository and branch are inferred, and everything is checked out into the agent's environment. It has sufficient GitHub permissions to leave comments. Now, even when I'm working on something locally, if I'm scared to merge something, I create a PR and then send 1 Claude, 1 Codex, and 1 Composer agent to inspect every nook and cranny of my code.
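The "inferred" part is mostly string parsing. A rough sketch, assuming a standard github.com PR link (the names here are illustrative, not lifted from my code):

```python
import re
from typing import NamedTuple


class PullRequestRef(NamedTuple):
    owner: str
    repo: str
    number: int


# Matches https://github.com/<owner>/<repo>/pull/<number>, optionally
# followed by a sub-page, query string, or fragment.
_PR_URL = re.compile(
    r"^https://github\.com/([^/\s]+)/([^/\s]+)/pull/(\d+)(?:[/?#].*)?$"
)


def parse_pr_url(url: str) -> PullRequestRef:
    """Extract owner, repo, and PR number from a GitHub pull request link."""
    # Slack can wrap links in angle brackets, so strip those first.
    match = _PR_URL.match(url.strip().strip("<>"))
    if match is None:
        raise ValueError(f"not a GitHub pull request URL: {url!r}")
    owner, repo, number = match.groups()
    return PullRequestRef(owner, repo, int(number))
```

The branch name isn't in the URL, so it needs one more lookup. One option is the `head.ref` field on the pull request object in GitHub's REST API; that step is left out of the sketch.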

Besides code review, it's also useful to be able to point the model to branches other than main, and decide whether the changes should land on the same branch or a new branch; this can now be set with --src-branch and --dest-branch. This allows you to start a new agent that can pick up where a previous one left off, and even address PR comments. The agent is (currently) blocked from pushing directly to main, but with the current pace of model improvements... we'll see.
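The branch logic fits in a few lines. In this sketch the defaults are assumptions: source falls back to main, and the destination falls back to a fresh branch named after a hypothetical run ID.

```python
from typing import Optional, Tuple

# Branches the agent may read from but never push to.
PROTECTED_BRANCHES = {"main"}


def resolve_branches(
    src: Optional[str], dest: Optional[str], run_id: str
) -> Tuple[str, str]:
    """Return (branch to check out, branch to push to) for one agent run."""
    source = src or "main"
    # Assumed default: land changes on a new branch unless told otherwise.
    destination = dest or f"agent/{run_id}"
    if destination in PROTECTED_BRANCHES:
        raise ValueError(f"agents may not push directly to {destination!r}")
    return source, destination
```

Passing the same name for both arguments is the "pick up where the last agent left off" case: the new agent checks out the existing branch and pushes right back to it.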

Scheduled Tasks

In SaaS, you either die a hero, or you have to build scheduled tasks. I'm still alive. So I had to build scheduled tasks. A scheduled task is defined as a Markdown file with frontmatter, followed by a prompt.

    ---
    # Run daily at 8am Pacific
    schedule: "0 8 * * *"
    repo: my-org/my-repo
    provider: codex
    model: gpt-5.3-codex
    reasoning_effort: xhigh
    notify: on_fail
    notify_style: summary
    then:
    - fix-bugs
    ---
    Find all bugs in this codebase.

On the schedule determined by the frontmatter, it spawns an agent with all the arguments in the frontmatter, plus the prompt in the body. Pretty simple! Some of these are more QA/review tasks, and the notify/notify_style settings determine how verbose the Slack notifications are. You'll also notice the then argument. This triggers an additional workflow, which starts seeded with this workflow's output. In principle, this could be used to do some pretty wild stuff. For now it's mostly Find Bugs → Fix Bugs.
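For the curious, here's roughly what reading one of these files involves. This is a stdlib-only sketch that handles just the flat keys and simple lists in the example above (a real YAML parser would be sturdier), and the `next_runs` seeding is my guess at the simplest possible chaining, not a description of the real thing.

```python
def parse_task(text):
    """Split a task file into (settings dict, prompt string)."""
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("task file must start with a '---' frontmatter line")
    settings = {}
    current_list = None  # the list being filled by "- item" lines, if any
    for index, raw in enumerate(lines[1:], start=1):
        line = raw.strip()
        if line == "---":
            # Everything after the closing marker is the prompt.
            return settings, "\n".join(lines[index + 1:]).strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and comments
        if line.startswith("- "):
            if current_list is None:
                raise ValueError(f"list item without a key: {line!r}")
            current_list.append(line[2:].strip())
            continue
        key, sep, value = line.partition(":")
        if not sep:
            raise ValueError(f"expected 'key: value', got {line!r}")
        value = value.strip().strip('"')
        if value:
            settings[key.strip()] = value
            current_list = None
        else:
            # A key with no value starts a list (e.g. "then:").
            current_list = settings[key.strip()] = []
    raise ValueError("frontmatter is never closed")


def next_runs(settings, output):
    """Pair each `then` task name with the output that seeds it."""
    return [(name, output) for name in settings.get("then", [])]
```

Feeding it the file above yields `settings["schedule"] == "0 8 * * *"` and `settings["then"] == ["fix-bugs"]`, with the prompt as the second return value. The scheduler then only has to compare the cron string against the clock and hand the rest to the agent spawner.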

I probably have like 30-50 of these workflows now. I could literally spend all day every day just clearing tickets created by all these workflows finding inconsistent styles and useEffect race conditions... Sounds like I need more workflows to fix the bugs found by my workflows. But then I'd need more workflows to find the bugs introduced by the workflows fixing the bugs found by the workflows. Hmm.

Finally, with this volume of coding agent exhaust, I had to make a cute vibe-coded slop dashboard to visualize all the ad-hoc Slack runs and scheduled workflows. So I did that.

Agents Managing Agents via CLI

Galaxy brain time. At some point, one realizes Slack is not the end-all-be-all of interfaces. In the course of building this, I realized that time had come. It was time to let Claude invoke Claude. It was time to make a CLI. Mine is called ccr, which stands for Claude Code Remote (a misnomer at this point, but it stuck).

The CLI is exactly like Slack (type command, spawn agent), except it is a CLI, so you can use it without leaving your terminal, and also Claude and Codex can use it. (It comes with, you guessed it, a skill.) This is very powerful. Not only can I spin off side-tasks into the cloud as I work on a main task ("oh yeah, this file is still .jsx, spin up an agent to make it .tsx please"), but I can also do crazy amounts of work in parallel, like gathering a bunch of customer profiles from the web, checking hundreds of Word documents for errors, or exhaustively code-reviewing every file in a codebase. Each ccr agent lands a PR on GitHub (if necessary) which gets auto-reviewed, and then I just have to merge 20-50 commits (fun!). It's amazing.
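The parallel part is plain fan-out: one subprocess per task, a thread pool to cap how many run at once. A sketch, with the command builder left to the caller because the exact ccr arguments are not spelled out in this post:

```python
import subprocess
from concurrent.futures import ThreadPoolExecutor


def fan_out(prompts, build_command, max_parallel=8):
    """Run one subprocess per prompt; return (prompt, exit code) in input order."""

    def launch(prompt):
        # build_command turns a prompt into an argv list for the CLI.
        result = subprocess.run(
            build_command(prompt), capture_output=True, text=True
        )
        return prompt, result.returncode

    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        # pool.map keeps results in the same order as the prompts.
        return list(pool.map(launch, prompts))
```

A caller would pass something like `lambda prompt: ["ccr", prompt]`, where that argument shape is purely hypothetical. Any non-zero exit code in the results is a task worth re-running or eyeballing.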

What's Left

In the past few months, Claude Code Remote has gone from a silly little project that made a good snarky blog post, to a core piece of how I run my company. The most important things that made this possible can be collapsed into a few themes:

- (1) Reducing the activation energy to spawn agents (CLI, scheduled tasks). This was the impetus behind the MVP, but it took a lot more work to make it a reality.

- (2) Making it faster and safer to consume AI coding outputs (auto-review, PR spawning). It's easy to write way more code, but it's harder to use and merge it safely. If you aren't able to consume it as fast as it's produced, the PRs start to create mounting dread instead of joy.

- (3) Making the sandbox environment match the local environment (skills). When it feels like your cloud agents are hobbled, you're more likely to just use 12 tabs of Claude Code and crash your MacBook.

There's more to do on all of these. An important question I (like many others) am thinking about is how to safely provide secrets to a coding agent (e.g. OpenAI API key, access to production logs, etc.). (The accepted answers seem to be "don't do it" followed by a secret-broker proxy. Sounds complicated!) I'm also currently barely keeping up with all the workflow outputs, so more work is needed to figure out how to triage and address them faster. Otherwise, I might have to hire a founding engineer to help clear all the tickets. But no one wants to be a founding engineer, and they're way more expensive than Claude.

Check back in a few months for Part 3, maybe!